Lead UX Designer │ Optimasi.ai │ Indonesia

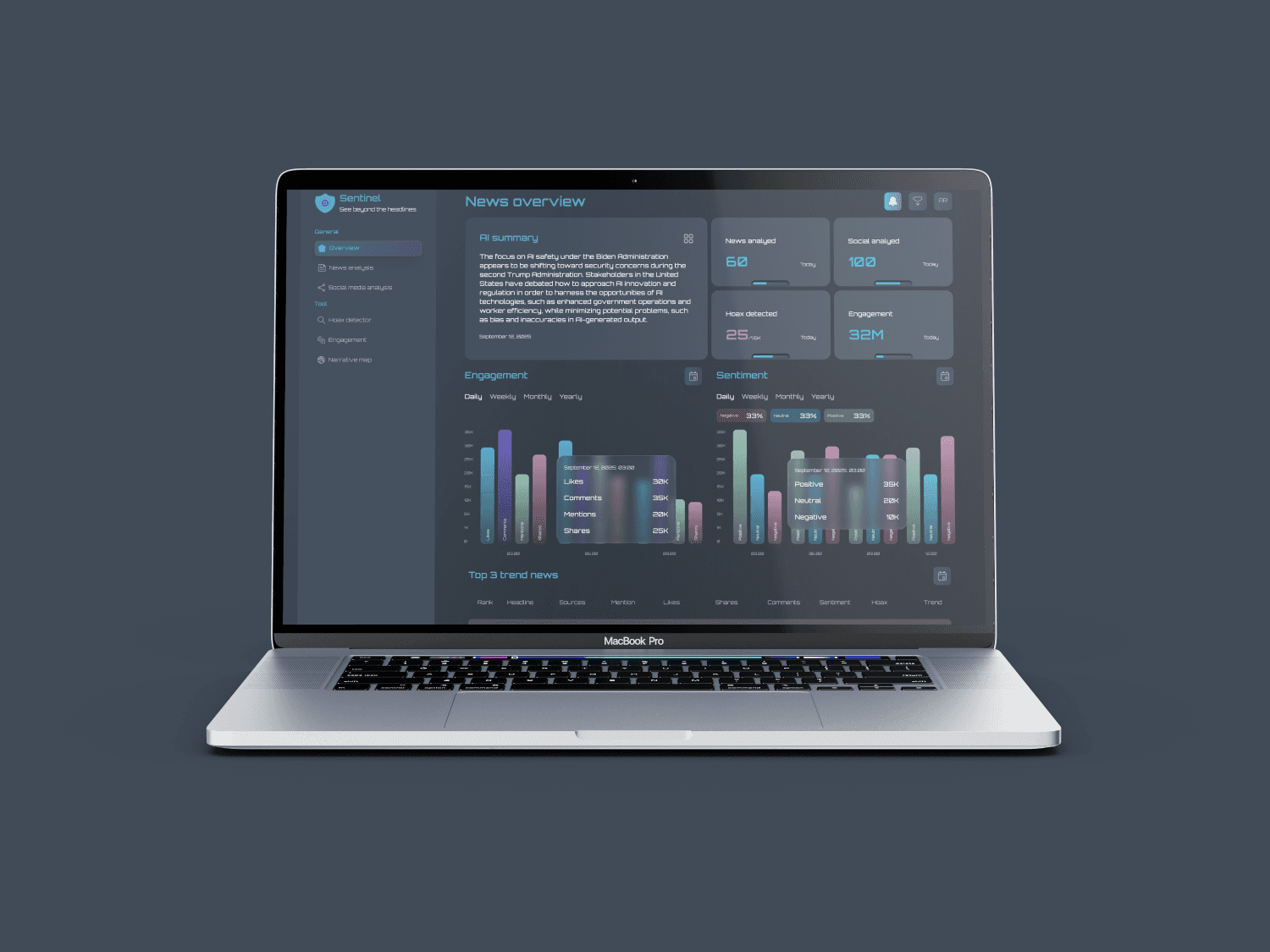

News monitor dashboard

See beyond the headlines

Introduction – The problem space

Set the context with today’s reality:

01

The flow of news and opinions on digital media is accelerating, both on national news portals and social media platforms like X

02

Distinguishing between credible information and potential hoaxes is increasingly difficult

03

At the same time, users want to know the impact of news: engagement levels, hoax risk, and public sentiment

In an era of information overload, how can we evaluate the credibility of news, understand public sentiment, and track its real-time impact?

Opportunity

Why this solution matters:

Sentinel serves as a futuristic dashboard that helps users instantly assess news sentiment, credibility, and impact with clarity and confidence

User need

Sentiment analysis, hoax risk detection, reach, and engagement breakdown

Benchmarking

Existing tools (e.g., Brandwatch, Talkwalker) are strong in analytics but weak in credibility checks

Local context

Initial scope focuses on Indonesian national news portals and social media (X) for relevance

Key pages

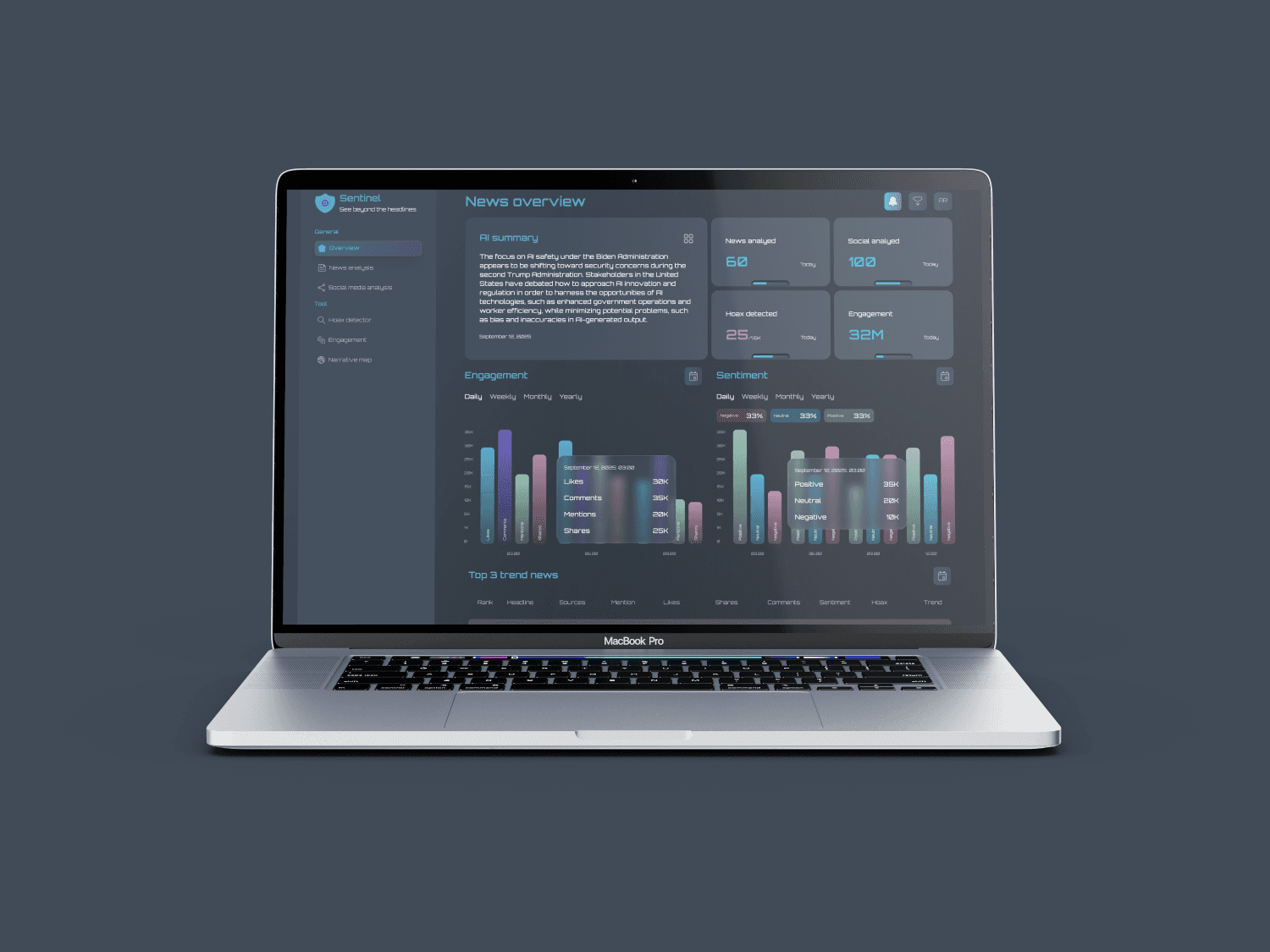

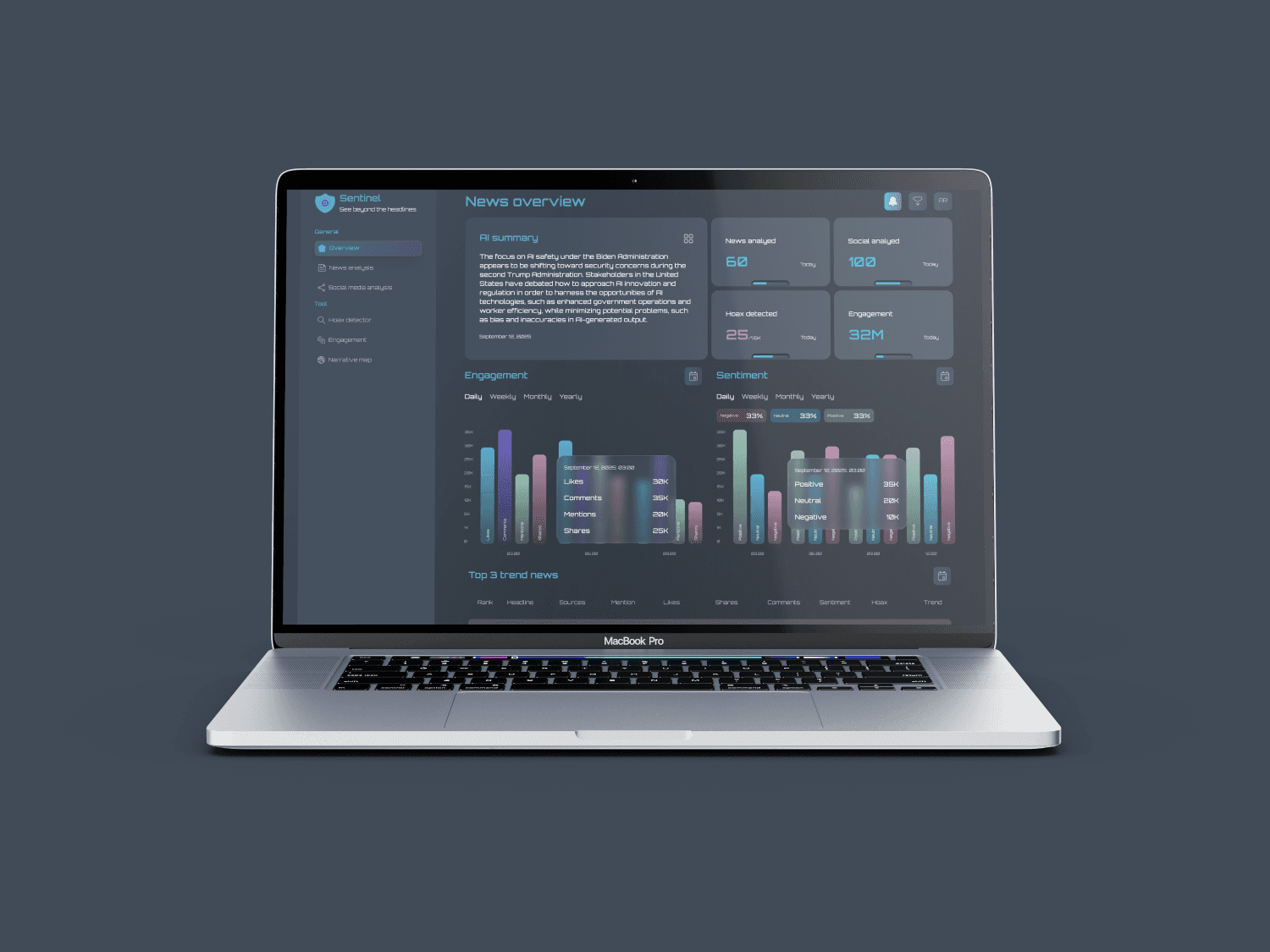

Dashboard Overview – The Command Center

Imagine a control room where metrics from hundreds of news articles and thousands of social media conversations are collected into a single view

AI summary

Provides a quick snapshot: how many news items have been analyzed, how many from social media, and how many hoaxes were detected

Engagement metrics

Visualized through a bar chart (mentions, likes, shares, comments)

Sentiment bar chart

Helps the team instantly see whether public opinion is leaning positive, negative, or neutral

Top 3 trending news table

Shows the highest-performing news, complete with overall rank, headline, source, engagement, sentiment, hoax level, and a trendline

Key pages

News Card Page – The News Explorer

After understanding the big picture, the team can dive deeper

AI news cards

Mini page of each article: image, source, sentiment, trendline, publication date, headline, hoax level, mentions, likes, comments, shares, and a detail button.

Search & advanced filters

To find specific news items or refine by sentiment, hoax level, engagement, or source

Pagination

Ensures smooth navigation even when hundreds of stories are streaming in daily
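
The filter and pagination behaviour described above could be modelled roughly as follows. This is only a sketch under assumptions: the `NewsCard` fields, the hoax-level labels, and the default page size of 12 are hypothetical and not taken from the actual product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class NewsCard:
    headline: str
    source: str
    sentiment: str    # "Positive", "Neutral", or "Negative"
    hoax_level: str   # hypothetical labels, e.g. "Low", "Medium", "High"
    engagement: int   # hypothetical total of mentions, likes, comments, shares


def filter_cards(
    cards: list,
    query: str = "",
    sentiment: Optional[str] = None,
    hoax_level: Optional[str] = None,
    source: Optional[str] = None,
    min_engagement: int = 0,
) -> list:
    """Keep the cards that match the search text and every filter that is set."""
    query = query.lower()
    return [
        card for card in cards
        if query in card.headline.lower()
        and (sentiment is None or card.sentiment == sentiment)
        and (hoax_level is None or card.hoax_level == hoax_level)
        and (source is None or card.source == source)
        and card.engagement >= min_engagement
    ]


def paginate(items: list, page: int, page_size: int = 12) -> list:
    """Return the items on a 1-indexed page; pages past the end are empty."""
    if page < 1 or page_size < 1:
        raise ValueError("page and page_size must be at least 1")
    start = (page - 1) * page_size
    return items[start:start + page_size]
```

Filtering first and paginating second keeps the page count consistent with whatever filters are active.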

Key pages

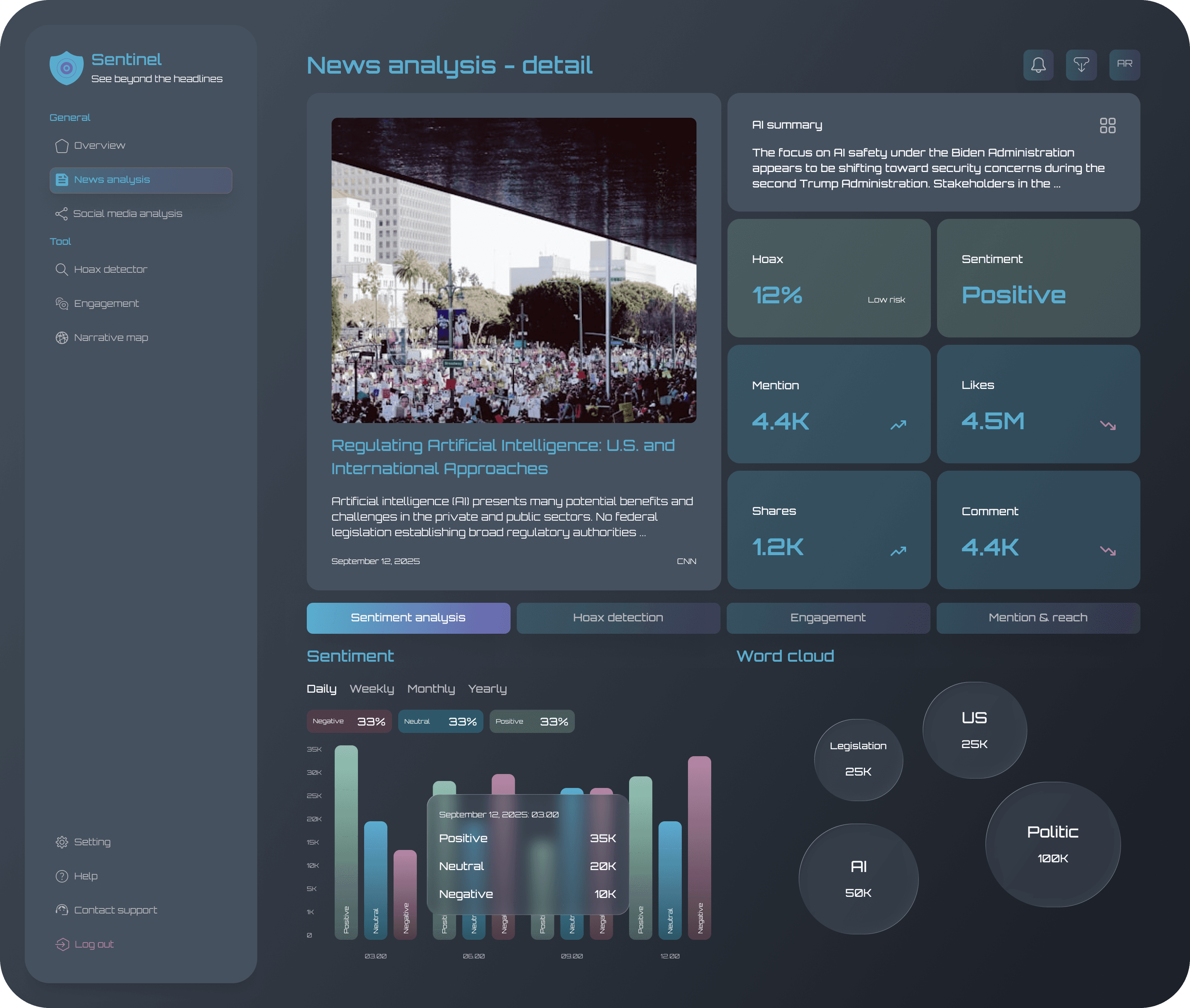

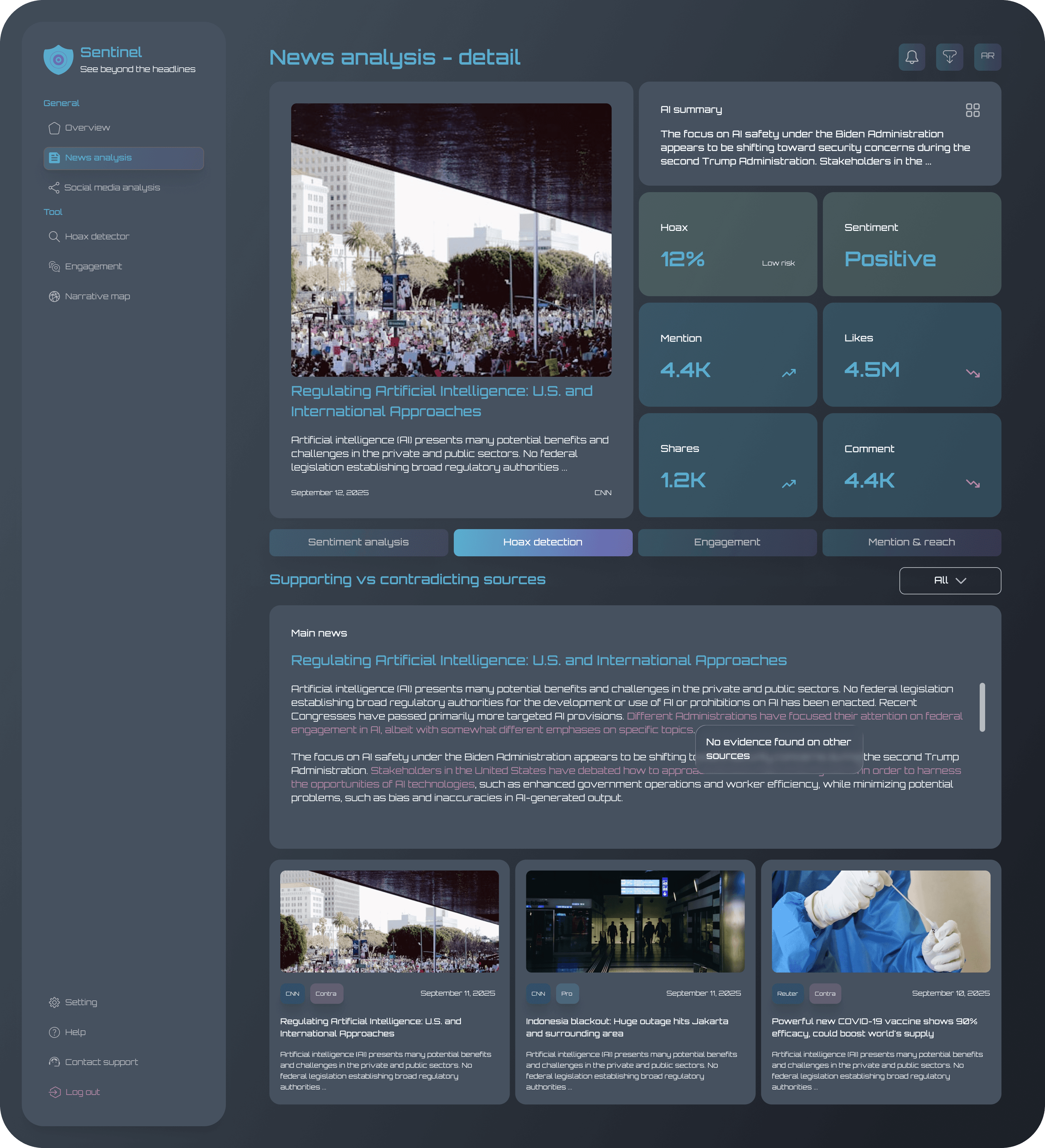

News Card Detail Page – The Deep Dive

Every story has its own layers

Hoax detection tab

Sentences flagged as questionable, plus a list of pro and contra sources from cross-checking

Sentiment analysis tab

Sentiment bar chart + a word cloud of the most frequent terms

Engagement tab

Bar chart of interactions (likes, shares, comments, mentions)

Mention & reach tab

Line chart visualizing how often the story is mentioned and how far it spreads across social media

Important algorithm

Sentiment analysis algorithm

Goal: classify news or social media posts into Positive, Neutral, or Negative sentiment

01

Data collection

Crawl news articles & social media posts (X, Facebook)

Extract text content (headline, body, captions, comments)

02

Preprocessing (NLP)

Tokenization → breaking text into words/sentences

Stopword removal → remove common words (such as in, to, that)

Lemmatization/Stemming → convert words to their root form

Handle emoji/slang (specifically for social media)
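
The preprocessing steps above could be sketched roughly as follows. This is a minimal illustration rather than Sentinel's actual pipeline: the `STOPWORDS`, `SLANG`, and `EMOJI` tables are tiny hypothetical stand-ins for full Indonesian dictionaries, and stemming is left out because it would normally come from a dedicated library.

```python
import re

# Hypothetical, deliberately tiny lookup tables (assumptions for illustration).
STOPWORDS = {"di", "ke", "yang", "dan", "itu", "ini"}
SLANG = {"gak": "tidak", "bgt": "banget", "yg": "yang"}
EMOJI = {"😡": "marah", "😊": "senang"}


def preprocess(text: str) -> list:
    """Lowercase, map emoji and slang to plain words, tokenize, drop stopwords."""
    text = text.lower()
    # Replace each known emoji with a word so its sentiment signal survives.
    for symbol, word in EMOJI.items():
        text = text.replace(symbol, f" {word} ")
    # Tokenization: keep runs of letters and digits, discard punctuation.
    tokens = re.findall(r"[a-z0-9]+", text)
    # Slang normalization happens before stopword removal, so "yg" -> "yang"
    # is also filtered out as a stopword.
    tokens = [SLANG.get(token, token) for token in tokens]
    return [token for token in tokens if token not in STOPWORDS]
```

Transformer models such as IndoBERT ship their own subword tokenizer, so in that setting only the emoji and slang handling would typically be kept.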

03

Feature extraction

Bag-of-Words / TF-IDF → to capture word frequency

Embeddings (BERT/IndoBERT) → for semantic context
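
To make the TF-IDF option concrete, here is a small pure-Python sketch. It assumes the documents are already tokenized and uses the plain `log(N / df)` form of IDF; libraries often apply a smoothed variant, so the exact weights will differ.

```python
import math
from collections import Counter


def tf_idf(docs: list) -> list:
    """Return one {term: weight} dict per tokenized document."""
    n_docs = len(docs)
    # Document frequency: the number of documents each term appears in.
    doc_freq = Counter(term for doc in docs for term in set(doc))
    vectors = []
    for doc in docs:
        counts = Counter(doc)
        total = len(doc)
        vectors.append({
            # Term frequency (share of the document) times inverse document frequency.
            term: (count / total) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return vectors
```

A term that appears in every document gets weight 0, which is why TF-IDF highlights the words that distinguish one story from the rest.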

04

Sentiment classification

Modern (preferred): Transformer model (IndoBERT / mBERT)

05

Output

Sentiment label: Positive, Neutral, Negative

Confidence score (%) → example: 78% Negative, 15% Neutral, 7% Positive
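
One common way to obtain per-label confidence percentages like these is a softmax over the classifier's raw scores. The sketch below assumes the model emits one logit per class in the order Negative, Neutral, Positive; that ordering and the function names are assumptions made for illustration.

```python
import math

# Assumed class order of the model's output (hypothetical).
LABELS = ("Negative", "Neutral", "Positive")


def to_confidence(logits: list) -> dict:
    """Apply softmax to raw scores and return a percentage per label."""
    peak = max(logits)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return {label: 100 * e / total for label, e in zip(LABELS, exps)}


def top_label(confidence: dict) -> str:
    """Return the label with the highest confidence."""
    return max(confidence, key=confidence.get)
```

The percentages always sum to 100, so the dashboard can show the winning label together with how close the runners-up were.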

Important algorithm

Hoax detector algorithm

Goal: evaluate credibility of a news item and flag potential misinformation

01

Claim extraction

NLP parses the text → identify “claims” (subject + predicate + object)

Example: “Government shuts down internet in city X.”

02

Cross-source verification (Hybrid approach)

Trusted sources database (official news portals, government statements)

Fact-checking DB (e.g., Mafindo, Google Fact Check Tools)

Other major outlets (cross-check consistency)

03

Textual similarity check

Use embeddings (cosine similarity via BERT/Doc2Vec)

If multiple trusted sources contradict the claim → raise credibility risk
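
The similarity check could look roughly like the sketch below. It assumes the claim and each trusted-source statement have already been turned into embedding vectors by a model such as BERT; the `related_statements` helper and the 0.8 threshold are hypothetical. Cosine similarity only finds statements about the same topic, so deciding whether a match supports or contradicts the claim would still need a separate step.

```python
import math


def cosine_similarity(a: list, b: list) -> float:
    """Cosine of the angle between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def related_statements(claim_vec: list, candidates: list, threshold: float = 0.8) -> list:
    """Return (text, similarity) pairs at or above the threshold, most similar first.

    `candidates` is a list of (text, embedding) pairs from trusted sources.
    """
    scored = [(text, cosine_similarity(claim_vec, vec)) for text, vec in candidates]
    matches = [pair for pair in scored if pair[1] >= threshold]
    return sorted(matches, key=lambda pair: pair[1], reverse=True)
```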

04

Network propagation analysis (Social media)

Track how the claim spreads on social media

Was it first posted by unverified or anonymous accounts?

Does it spread mainly in closed echo chambers?
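
The two propagation questions above can be turned into simple numeric signals. The sketch below is an assumption-heavy illustration: the `Post` fields are hypothetical, and the `community` label is presumed to come from an upstream graph-clustering step that is not shown.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Post:
    account: str
    verified: bool
    timestamp: int   # seconds since epoch
    community: str   # hypothetical cluster label from a graph-clustering step


def propagation_signals(posts: list) -> dict:
    """Summarize where a claim started and how concentrated its spread is."""
    if not posts:
        raise ValueError("posts must not be empty")
    # Earliest post: was the claim first published by an unverified account?
    first = min(posts, key=lambda post: post.timestamp)
    # Share of posts inside the single largest community; values near 1.0
    # suggest the claim circulates mostly within one echo chamber.
    communities = Counter(post.community for post in posts)
    largest_share = communities.most_common(1)[0][1] / len(posts)
    return {
        "origin_unverified": not first.verified,
        "largest_community_share": largest_share,
    }
```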

05

Scoring system

Combine multiple signals into Credibility Score (0–100)

Weight for trusted sources support

Weight for contradictions from fact-checkers

Weight if the claim originated from suspicious/unverified accounts
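
One simple way to combine these signals is a weighted average scaled to 0–100. The sketch below assumes each signal is normalized to the range 0 to 1; the weights of 40, 40, and 20 are placeholders chosen for illustration, not values from the actual system.

```python
def credibility_score(
    support: float,
    contradiction: float,
    suspicious_origin: float,
    w_support: float = 40.0,
    w_contradiction: float = 40.0,
    w_origin: float = 20.0,
) -> float:
    """Combine three signals in [0, 1] into a 0-100 credibility score.

    support:           share of trusted sources backing the claim
    contradiction:     strength of contradictions from fact-checkers
    suspicious_origin: how suspicious or unverified the originating accounts are
    """
    def clamp(value: float) -> float:
        return max(0.0, min(1.0, value))

    # Support raises the score; contradiction and suspicious origin lower it,
    # so those two signals are inverted before weighting.
    weighted = (
        w_support * clamp(support)
        + w_contradiction * (1 - clamp(contradiction))
        + w_origin * (1 - clamp(suspicious_origin))
    )
    return 100 * weighted / (w_support + w_contradiction + w_origin)
```

Keeping the weights as parameters makes it straightforward to tune them later against labelled examples.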

06

Scoring system thresholds

80–100 → Likely credible

40–79 → Medium risk

0–39 → High risk / potential hoax
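
The bands above map directly onto a small lookup function. The band boundaries come from the list; treating fractional scores such as 79.5 as belonging to the lower band is an assumption.

```python
def risk_band(score: float) -> str:
    """Map a 0-100 credibility score to its risk band."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score >= 80:
        return "Likely credible"
    if score >= 40:
        return "Medium risk"
    return "High risk / potential hoax"
```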

07

Output

Credibility Score

Highlight suspicious sentences (e.g., “shuts down internet” flagged ⚠️)

Show “Supporting vs Contradicting Sources” in UI

Reflection & next steps
